I once got a 34-day free trial. Not 30 days, not ‘one month’, but thirty-four days.

It was for YNAB, a personal budgeting app. At first, it felt completely random. Most free trials hover between five and nine days, so why 34?

But when I opened the app, it all made sense.

Budgeting doesn’t deliver instant value; you need a full cycle:

- A payday

- Bills going out

- Real behavior over time

YNAB wasn’t trying to impress me in a week. They were giving me enough time to actually experience the product.

That moment really stuck with me. It made me wonder: are we, as an industry, selling ourselves short by defaulting to seven-day trials?

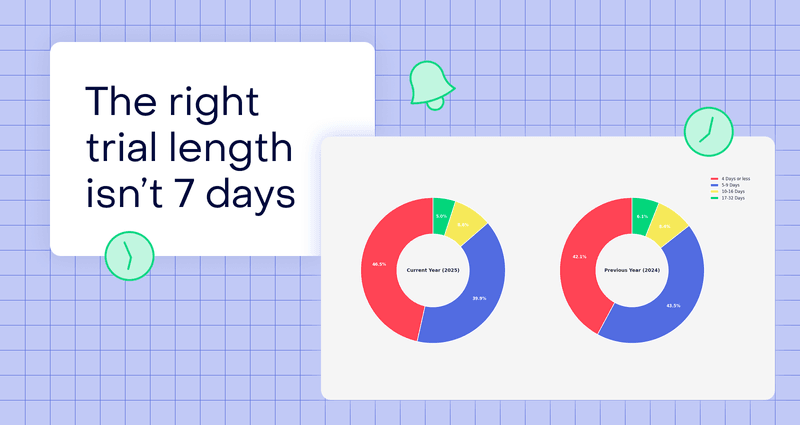

In 2024, just over half of all trials fell within the 5–9 day range, up from 2023. In 2025, trials are getting even shorter. Trials of four days or less gained share, rising to almost half (46.5%) of all trials.

While we’ve all heard the “7-day trial is dead” generalization thrown around, that seems to be because trials are only getting shorter. So, despite all the nuance we’re about to explore, the industry default is becoming more entrenched, not less.

And that’s the problem. A seven-day trial isn’t inherently bad; it’s just rarely questioned. Trial length deserves the same level of thought as onboarding, activation, and retention.

Because at the end of the day, trial length isn’t a pricing decision. It’s a product decision.

Hannah Parvaz, Founder of Aperture, puts it this way:

“I’m very much in the ‘trial length is a design decision, not a default’ camp. Across multiple subscription apps, the biggest mistake I see is treating trial length as a growth lever in isolation, rather than anchoring it to time-to-value and confidence-building.”

That idea — that trial length is a product decision — sent me down a classic Daphne rabbit hole: what actually determines the right free-trial length?

Before trial length: should you even offer a trial?

Before we dive into different trial lengths, there’s a more important question to answer first:

Do you even need a trial?

Optimizing trial length is meaningless if the trial itself is the wrong strategy.

I used to think trials were a must. After all, most apps across categories offer some form of trial, according to the State of Subscription Apps 2026:

Not a single category has a majority of apps without some form of trial. The closest is Social, which has the highest share of no-trial apps at 43.6%.

I read an article by David Vargas that completely changed how I think about trials — he frames it this way:

“We have to remember that a free trial is just one strategy. It relies on how ‘sticky’ the product and features are to convince users to subscribe.”

What really caught my attention was an experiment where David removed the free trial entirely.

It felt bold (my favorite type of experiment) and slightly terrifying. But the context mattered. They were seeing strong trial-to-paid conversion, yet paid acquisition wasn’t working; the cost per acquisition was too high once they factored in how many trial starts were needed to land a single paying customer.

Removing the trial nearly doubled lifetime value and unlocked paid growth.
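
To make the acquisition math concrete, here is a minimal sketch of how the cost of one paying customer grows once you account for trial starts that never convert. The function name and all numbers are hypothetical, chosen only for illustration; they are not figures from David’s experiment.

```python
def cost_per_paying_customer(cost_per_trial_start: float, trial_to_paid_rate: float) -> float:
    """Ad spend needed to land one paying customer through a trial funnel."""
    if not 0 < trial_to_paid_rate <= 1:
        raise ValueError("trial_to_paid_rate must be greater than 0 and at most 1")
    # Each paying customer requires 1 / trial_to_paid_rate trial starts.
    return cost_per_trial_start / trial_to_paid_rate


# Hypothetical inputs: $10 per trial start, 25% of trials convert to paid.
effective_cpa = cost_per_paying_customer(cost_per_trial_start=10.0, trial_to_paid_rate=0.25)
print(f"Cost per paying customer: ${effective_cpa:.2f}")
```

Even with a healthy trial-to-paid rate, the real cost per customer is a multiple of the cost per trial start, which is the number that decides whether paid acquisition works.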

That’s an important consideration, even if you keep a trial. Unless your trial is very short, you’ll usually end up optimizing paid channels for trial starts rather than purchases. And if conversions occur outside the attribution window, ad platforms optimize for people who like starting trials, not for those who actually pay.

So the first principle is this:

Don’t touch trial length until you’re clear on whether a trial should exist at all.

Testing removal sounds scary, but if your trial isn’t pulling its weight, it’s worth questioning the assumption or focusing first on adding real value with your trial. As Dan Layfield, Founder of Subscription Index (ex-Codecademy and Uber), puts it:

“Trials are your friend so long as they are visible, clear, and appealing to your users.”

Once you’re convinced your trial should exist, then it’s time to debunk some trial myths.

The myth: shorter trials convert better

Our instinct is simple: shorter trials create urgency, urgency drives action, and action drives conversion. Simple, right?

It’s the same line of thinking as running a 24-hour sale or telling users an item is almost sold out. It’s a massive kick up the rump.

And sure, there are situations where short trials help, especially if you want to quickly optimize paid campaigns for purchases. But the data shows it’s more nuanced.

According to RevenueCat’s State of Subscription Apps report, shorter trials come with huge day 0-1 cancellation spikes. For three-day trials, over 55% of users cancel almost immediately. Compare that to around 31% for 30-day trials.

That early cancellation doesn’t automatically mean poor intent. Often it’s driven by:

- Lack of trust

- Fear of forgetting to cancel

- And the classic: “I’ll cancel now just in case”

What’s interesting is that the longer the trial, the fewer cancellations there are. 84% of 3-day trial cancellations and 64% of 7-day trial cancellations happen on days 0–1. The risk isn’t late, it’s right at the start.
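
Note that these figures measure two different things: the share of trial starters who cancel early, and the share of all cancellations that happen early. A small sketch of how both could be computed from your own data, assuming you have the day offset of each cancellation; the cohort below is hypothetical and not taken from the report.

```python
from typing import List, Tuple


def early_cancellation_shares(trial_starts: int, cancel_days: List[int]) -> Tuple[float, float]:
    """Return (share of trial starters cancelling on day 0-1,
    share of all cancellations that fall on day 0-1)."""
    if trial_starts <= 0 or not cancel_days:
        raise ValueError("need at least one trial start and one cancellation")
    early = sum(1 for day in cancel_days if day <= 1)
    return early / trial_starts, early / len(cancel_days)


# Hypothetical cohort: 200 trial starts, 100 cancellations in total.
cancel_days = [0] * 60 + [1] * 20 + [2] * 10 + [5] * 10
share_of_starters, share_of_cancellations = early_cancellation_shares(200, cancel_days)
print(f"{share_of_starters:.0%} of trial starters cancel on day 0-1")
print(f"{share_of_cancellations:.0%} of all cancellations happen on day 0-1")
```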

So no, shorter isn’t automatically better. But now I’ll get annoying: longer isn’t automatically smarter either.

Longer trials: when they help, and when they hurt

When people hear this, they often jump straight to: “Longer must be better.”

But blindly following aggregate data is just as dangerous as defaulting to a seven-day trial.

Yes, 17–32 day trials do show higher trial-to-paid conversion on average (a 42.5% median conversion rate vs. 25.5% for trials under four days):

That sounds great, but we often assume trial-to-paid conversion automatically equals a better outcome, and that’s where things get tricky.

For example, I worked with a wellness app where our key activation metric was consuming two long-form content pieces within 14 days. Each piece lasted about 45 minutes and was tied (though not exclusively) to a weekly live session. That behavior was a strong predictor of long-term retention.

So we asked the obvious question: why run a seven-day trial when our activation window is 14 days?

Time for an experiment: we A/B tested a 7-day vs 14-day trial. While the longer trial drove slightly more trial starts, fewer people converted overall.

Not because people were abusing it or needed more time, but because activation didn’t improve. People weren’t consuming more content; they were delaying. Classic procrastination. It performed worse in converting to paid, and we reverted to the 7-day trial.

That’s the dark side of long trials we’ve all experienced. A long gym trial feels painless to postpone, but a short, paid intro lesson you need to book within seven days forces action. “I’ll use it later” quietly becomes “I never really did.”

The takeaway: trial conversion is just a leading metric. What actually matters is:

- Revenue per user over time

- Retention

- Sustained engagement
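
One way to keep a trial test honest is to compare variants per install rather than per trial start. The sketch below assumes you can pull four cohort totals per variant; the class, field names, 90-day revenue window, and all numbers are hypothetical and are not the results of the experiment described above.

```python
from dataclasses import dataclass


@dataclass
class TrialVariant:
    name: str
    installs: int            # users who reached the paywall
    trial_starts: int
    paid_conversions: int
    revenue_90d: float       # cohort revenue 90 days after install (assumed window)

    @property
    def trial_start_rate(self) -> float:
        return self.trial_starts / self.installs

    @property
    def trial_to_paid_rate(self) -> float:
        return self.paid_conversions / self.trial_starts

    @property
    def paid_per_install(self) -> float:
        return self.paid_conversions / self.installs

    @property
    def revenue_per_install(self) -> float:
        return self.revenue_90d / self.installs


# Hypothetical cohorts: the longer trial wins on trial starts but loses downstream.
variants = [
    TrialVariant("7-day", installs=10_000, trial_starts=2_000, paid_conversions=800, revenue_90d=24_000.0),
    TrialVariant("14-day", installs=10_000, trial_starts=2_200, paid_conversions=770, revenue_90d=22_000.0),
]

for v in variants:
    print(
        f"{v.name}: start rate {v.trial_start_rate:.1%}, "
        f"trial-to-paid {v.trial_to_paid_rate:.1%}, "
        f"paid per install {v.paid_per_install:.2%}, "
        f"revenue per install ${v.revenue_per_install:.2f}"
    )

winner = max(variants, key=lambda v: v.revenue_per_install)
print(f"Winner on revenue per install: {winner.name}")
```

Judging by trial starts alone would pick the longer trial here; judging by revenue per install picks the shorter one. Retention and engagement would need their own cohort metrics on top of this.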

There’s even a great SaaS study by Hema Yoganarasimhan, Ebrahim Barzegary, and Abhishek Pani showing that shorter trials (seven days) can outperform 30-day trials on acquisition, retention, and profitability.

That being said, a user who converts on day seven and churns on day eight is not a win.

So if you’re feeling a little confused right now, that’s fair: I’ve just dismantled both extremes (sorry!). But fear not, there is an answer: trial length only makes sense in context.

Across teams, Hannah Parvaz has seen a few consistent patterns emerge:

- “If the core value is experienced in one session, long trials often hurt paid conversion. In these cases, shorter trials (or even no trial at all, with strong reassurance) outperform because users either get it quickly or they never will.

- “If value compounds over time (habits, learning, behaviour change), a longer trial can work, but only if the onboarding actively guides users to the aha! rather than passively waiting for it to happen.

- “Seven days is rarely optimal by default. It’s often too long for fast-value products and too short for slower, trust-based ones. We’ve seen better outcomes with everything from three days to 30 days, depending on how quickly users hit a meaningful milestone.”

Let’s dive deeper into that context to better understand the nuances, then we’ll outline a framework to determine the right trial length for your app.

Pricing matters for trial length

One thing that’s easy to forget when discussing trial length is how closely it’s linked to pricing and packaging.

A 14-day trial before a $5 monthly plan behaves very differently from a 14-day trial before a $120 annual commitment. In the first case, the user’s risk is low. In the second, the psychological bar is much higher: users need more proof, more confidence, or simply more time before paying feels justified.

This is why you’ll often see longer trials attached to annual plans, or trials offered only on annual pricing. It’s not about generosity, it’s about reducing perceived risk. A higher price or a longer plan may require a longer trial.

Headspace saw a strong conversion lift by offering a 14-day free trial with its annual plan, while offering seven days with its monthly plan. It sounds like the longer trial made users more comfortable committing to a longer plan and helped make the annual plan more attractive.

Not only that, but with shorter subscription periods, adding a trial can almost be overkill and may even devalue your app. Weekly subscriptions, for example, already act like a trial, so you might not need another free trial on top of that. Apps that do offer a free trial with a weekly plan often offer only three days rather than a full week, since giving a full week free essentially devalues the subscription.

Category matters more than you think

There is no universal ‘best’ trial length (though the data would suggest leaning towards longer). When you break down trial data by category, the differences are striking:

Gaming apps overwhelmingly favor very short trials, often under four days. Longer trials invite abuse, with players optimizing for completion rather than habit formation.

Photo & Video apps also skew short, because value is immediate; users can edit meaningful content quickly and see the tool’s benefits.

By contrast, Health & Fitness, Education, and Travel apps require more time for progress or focus on more serious commitments (e.g. booking a vacation). Trials of 5–9 days are far more common here, which helps explain why seven days became the industry default.

But common doesn’t mean correct. Hence my polite begging: please don’t default to seven days. The key principle is that trial length should align with activation time, not pricing norms or industry practices.

Engagement is the silent killer of long trials

Long trials often look good on the surface, but in reality, they’re much harder to manage. It’s a classic case of Instagram versus reality — the gorgeous holiday photo versus the food poisoning that left you bedbound.

A long trial with YNAB works because the burden shifts to the product; they actively guide users through workshops, live sessions, and a clear methodology.

The trial isn’t passive. Rather, it’s structured, and sadly, that’s rare.

For most apps, the question isn’t: “Can we get someone to try it once?” It’s: “Can we build a habit before they lose momentum?”

To do that, you need to:

- Drive repeat usage

- Prevent procrastination

- Continuously build perceived value

I experienced this recently with GOWOD, a mobility app offering a 14-day trial. The onboarding was strong; they started with a mobility assessment, which is great (my hip mobility definitely needs some work).

But mobility is one of those things we know we should do, but rarely prioritize. A long trial made it easy to delay. I started the trial during a busy period, thinking I’d surely find time to complete some sessions.

In reality, I didn’t manage more than two in 14 days, definitely not enough to build the habit. If I’d had a clearer goal or challenge, I might have stuck to it, e.g. committing to X sessions per week for the two weeks and measuring my mobility again afterwards.

Freemium and free trials

Freemium complicates things even further. I’ve seen apps offer such generous freemium access and long trials that I honestly start wondering if they want people to pay at all.

A study by Ling Zhang and Jiang Duan, focused on a freemium SaaS company, found that longer trials increase trial starts but don’t necessarily improve conversion. If users aren’t getting enough value to pay, trial length won’t save you. But here is where the study gets interesting: longer trials did boost delayed conversion. Users who had more time to test premium features came back to convert later on.

This explains why apps like Strava and Medium deliberately offer 30-day trials despite appearing ‘simple’. They’re not optimizing for immediate conversion — they’re playing a longer game.

Take into consideration the various factors that impact a lot of freemium apps:

- The value of network effects: a lot of freemium apps depend on word of mouth for growth — and also for valuable data, e.g. Strava needs enough users in an area for a sport to segment leaderboards. Medium readers feed the algorithm on which content is engaging and which isn’t.

- Value accrues over time: in Strava’s case, I can also imagine that many of their data features (e.g. analyzing your performance) take more than a week to appear. Freemium apps tend to be ‘slow burns’, and if you’re offering premium features only behind a paywall (vs. allowing a taster of features upfront) a longer trial may add value. The same goes for Medium, where readers build up a list of writers and content they like. This also increases the switching costs over time.

So there’s an additional layer of complexity: does a longer trial create network effects or switching costs that outweigh lower immediate conversion? For platform businesses, the answer is often yes.

If you’re freemium, the real question isn’t trial length — it’s how much value must be locked behind the paywall for paying to make sense, while still keeping free users engaged enough to strengthen the ecosystem.

The psychology behind trial length

Once you zoom out, trial length is really about psychology.

Loss aversion is the big one. Ending a trial can feel like losing something you already own, especially when users have:

- Invested time

- Created data

- Built routines

This isn’t strictly about duration; it’s about investment. Photo editing apps can create loss aversion in just a few days. Games can do it even faster. Other products need longer.

That links closely to the endowment effect. The more effort users put in, the harder it feels to walk away. Think IKEA furniture: frustrating to build, yet weirdly hard to get rid of once assembled (unless, like me, you manage to break it while building).

But longer trials only work if investment feels natural and repeatable: not a one-off setup task, but something that builds over time.

On the flip side, urgency is where shorter trials shine. They force early engagement and reduce procrastination.

Every trial sits on a tension line:

- Urgency vs. habit formation

- Speed vs. depth

Usage frequency matters:

- Daily-use apps can build habits quickly.

- Weekly or monthly cadence products need longer exposure.

Now add cognitive load into the mix (slight psychology 101 here, but bear with me!):

- Complex products require learning time

- Simple products don’t, so a long trial just adds unnecessary friction

The simplest way to frame it is this: your trial should be long enough to form a habit, but short enough to avoid being forgotten.

A practical framework to choose your trial length

To make this actionable, I like thinking in terms of natural usage habits — a concept popularized by Phiture’s Mobile Growth Stack.

Before choosing a trial length, ask three key questions inspired by this model:

- How often must users engage to experience value? Consider the balance of usage frequency and number of engagement moments. For example, in one app, helping users make five new friends rather than just one hugely improved retention.

- When does the first real aha! moment happen? This isn’t the end goal (like running a marathon with Strava), it’s the point where users see clear progress, like completing the first few workouts, or achieving some measurable milestone.

- What behavior must exist before paying makes sense? Identify the minimum meaningful behavior that signals the product is valuable and worth paying for. This is the threshold where the trial converts into a paying experience.

Even then, I can’t recommend running a test strongly enough: measure both the short-term and long-term impact of any trial duration change. Thinking through the questions above first is what gives you a sensible hypothesis to test.

If you’re still unsure, here are some rough guidelines (not rules!) to help:

- 3–7 days: simple utilities, games, and quick-value apps

- 7–14 days: daily-use apps where time is needed for habit formation

- 14–30 days: weekly cadence tools, like a project management app, where users need 2–3 cycles to see value

- 30+ days: complex analytics or reporting tools, where onboarding, guidance, and meaningful data collection take time
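
If it helps to keep these guidelines next to your experiment config, they can be encoded as a simple lookup. This is a minimal sketch: the profile keys and function name are hypothetical labels for the four buckets above, and the output is a starting hypothesis to test, not a rule.

```python
from typing import Dict, Optional, Tuple

# (min_days, max_days); a max of None means "30 days or more".
# Keys are hypothetical labels for the four guideline buckets.
TRIAL_GUIDELINES: Dict[str, Tuple[int, Optional[int]]] = {
    "quick_value": (3, 7),       # simple utilities, games, quick-value apps
    "daily_habit": (7, 14),      # daily-use apps that need time for habit formation
    "weekly_cadence": (14, 30),  # tools where users need 2-3 weekly cycles
    "complex_data": (30, None),  # analytics or reporting tools with slow data collection
}


def suggested_trial_range(product_profile: str) -> Tuple[int, Optional[int]]:
    """Look up the rough trial-length range (in days) for a product profile."""
    try:
        return TRIAL_GUIDELINES[product_profile]
    except KeyError:
        known = ", ".join(sorted(TRIAL_GUIDELINES))
        raise ValueError(f"unknown profile {product_profile!r}; expected one of: {known}") from None


low, high = suggested_trial_range("weekly_cadence")
print(f"Start testing between {low} and {high} days")
```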

Advanced thinking: not all users need the same trial

If you have a big enough user base, you can take trial experimentation further. Different users may benefit from different trial lengths:

- Fast activators vs. slow starters

- Freemium-activated users vs. cold starts

- Monthly vs. annual plans (some teams only offer trials on annual plans)

- Trials as part of win-back flows

The caveat: complexity only helps if you execute it well. Over-engineering monetization often creates confusion rather than clarity.

It’s also worth noting that there are App Store limitations around trial length and setup. To get fancy like YNAB, you may need a web-based trial (luckily, web-to-app is all the rage these days). Just ensure the user experience matches what users see in-app; otherwise, Apple may reject your app.

Alternatively, if you want to test extended trials for specific users, you could use promotional offers on iOS. On Android, you can defer a subscription’s expiry via code.

This kind of segmentation is typically only viable once you have strong activation signals and enough volume to avoid muddying your results.

Beyond the 7-day default

So please don’t default to seven days. While it isn’t a bad starting point for most apps, there are plenty of cases where shorter or longer trials could have a more meaningful impact.

First, decide whether a trial should exist at all, and ensure it effectively activates users. Be bold, like David, and test removing it entirely. If you test trial length, use the right measures of success: trial starts and trial-to-paid conversion rates aren’t the full scorecard; activation, retention, and revenue are.

Next, understand your users’ psychology. Do they need urgency or time to invest? Does loss aversion play a role? Context matters, as gaming thrives on short trials, while Health & Fitness apps often default to longer ones for good reason.

At the end of the day, the right trial length isn’t the one that converts the most users. It’s the one that creates customers who actually stick around.